Deployment (Cloud / Edge)

Delivering AI systems reliably across cloud, edge, and hybrid environments.

Why This Capability Exists

An AI system is only valuable if it runs where the decision happens.

Many AI initiatives fail not because the models are wrong, but because deployment environments are mismatched, latency is ignored, or systems can’t adapt across infrastructure boundaries.

This capability ensures AI systems are deployed intentionally, not opportunistically.

The Outcome

- Stable AI performance across cloud, edge, and hybrid setups

- Reduced latency for real-time or near-real-time decisions

- Infrastructure that scales without architectural rewrites

Used When

- Deploying AI systems across multiple environments

- Supporting real-time or low-latency inference

- Operating in bandwidth-constrained or offline scenarios

- Balancing cost, performance, and reliability

How This Fits into Our Services

This capability supports and strengthens our core services:

- Generative AI Solutions

- AI Agents & Multi-Agent Systems

- Predictive Analytics & ML Systems

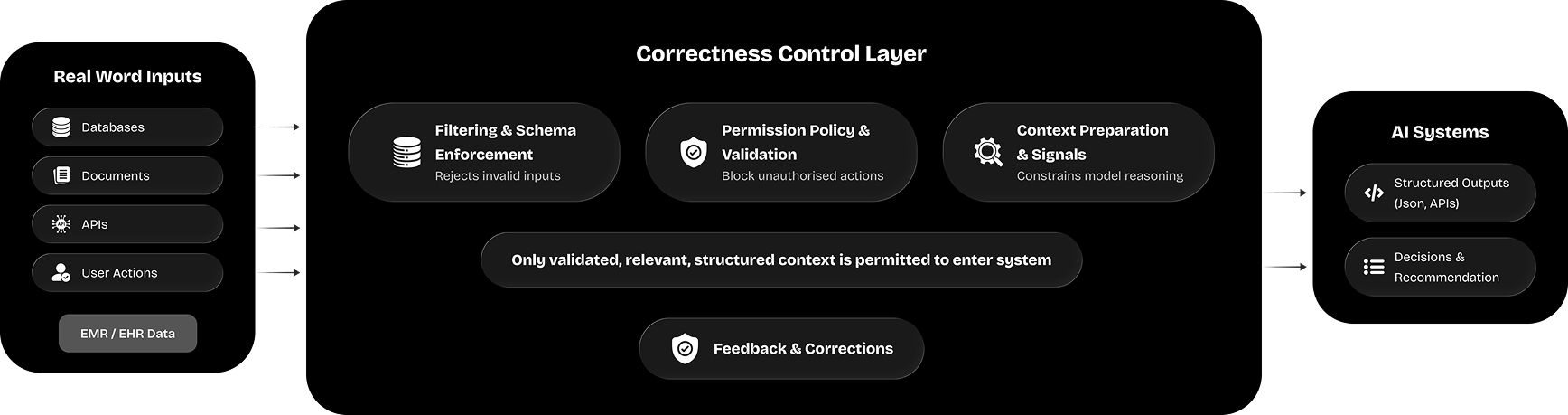

Architectural Role

Where correctness is controlled

How We Approach This Work

- Environment-aware deployment strategies, not one-size-fits-all

- Designed for resilience under real-world constraints

See how this powers our AI systems

View relevant case studies

We are Microsoft Certified

Speak Directly With the People Who Build

D 908 - PNTC, Times Of India Press Rd, Prahlad Nagar, Ahmedabad, Gujarat - 380015, India

Langenthalstrasse 8A, 4912 Lotzwil, Switzerland

1204 Al Manara Tower, Business Bay, Dubai, United Arab Emirates

© 2026 CIZO. All rights reserved.

Built with enterprise standards for security, data handling, and delivery.

Partnered with & recognized by global technology platforms.